The problem was never knowing good principles. It was applying them consistently. AI agents change that equation — not just for individuals, but for nonprofits, communities, and any organization that wants to operate near its full potential.

The Gap Between Knowing and Doing

Charlie Munger spent decades compiling his “latticework of mental models” — first principles, inversion thinking, the Pareto principle, feedback loops. He became one of the most effective thinkers of the 20th century by systematically applying them. His secret? He didn’t just know these principles. He practiced them, obsessively, on every decision, every day, for decades.

Most of us know this story. And most of us also know the humbling reality: we read the same books, nod our heads at the same principles, and then — under pressure, distraction, fatigue, or the fog of a hundred competing priorities — we fall back on gut feel, social proof, or the loudest voice in the room.

This is not a character flaw. It is a cognitive architecture problem.

The human brain is not optimized for consistent, principled reasoning. It is optimized for pattern recognition, social cohesion, and short-term survival. Systematic thinking (evidence gathering before hypothesis, root cause before symptom, inversion before action) requires deliberate effort, and that effort is hard to sustain across a long day of competing decisions.

This is why the most effective individuals and organizations in history have developed external systems to enforce good thinking: checklists, postmortems, pre-mortems, double-entry bookkeeping, peer review, constitutions. Every enduring institution is, at its core, a system for making the consistent application of good principles structurally mandatory rather than personally optional.

We are now entering an era where AI agents can be that system, one that operates at the speed of software, the scale of a team, and the consistency of a machine.

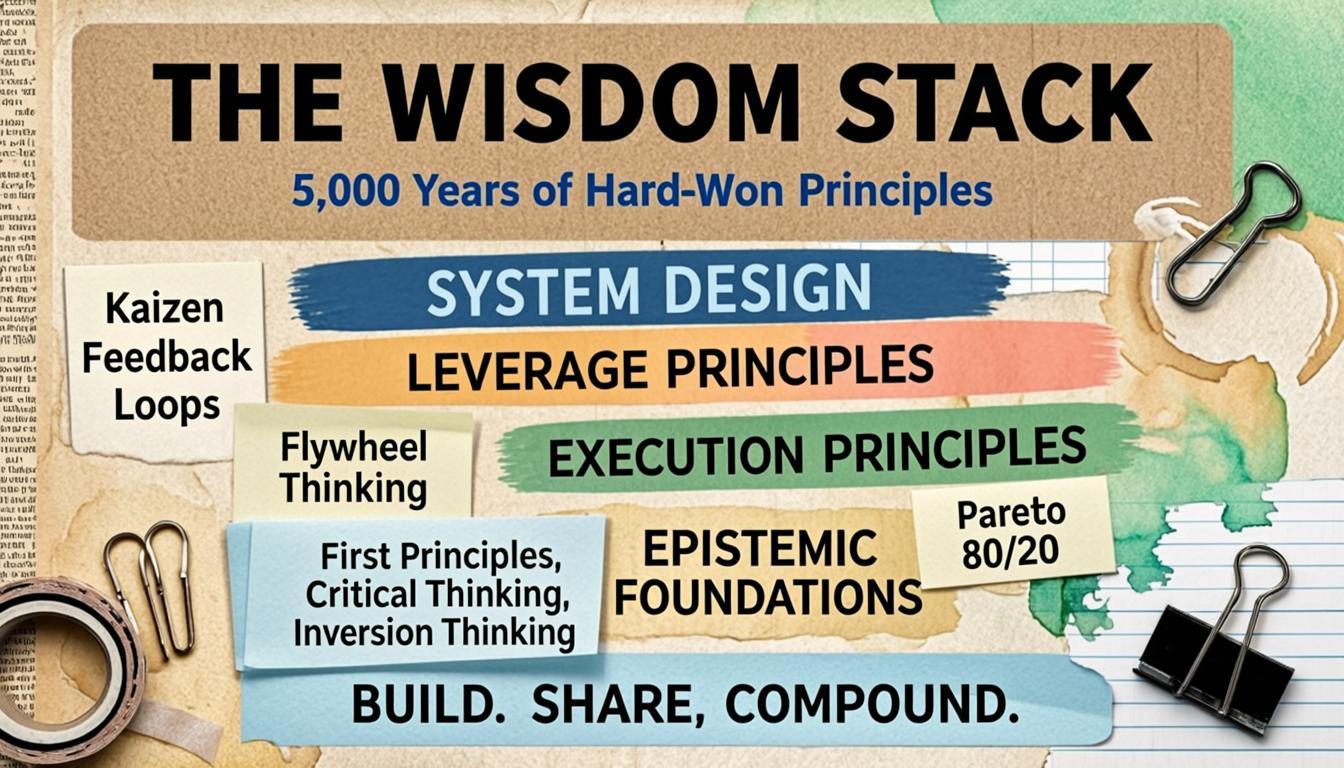

The Wisdom Stack: 5,000 Years of Hard-Won Principles

Across philosophy, science, business, and military strategy, humanity has converged on a set of high-leverage principles. They come from different eras and cultures, but they rhyme across domains. Let’s call this the Wisdom Stack — layered from how we think, to how we act, to how we build systems that improve themselves.

Layer 1: Epistemic Foundations — How to Think

First Principles Thinking. Don’t accept inherited assumptions. Strip back to bedrock truth and reason forward. Aristotle formalized it. Elon Musk made it famous again. Its power: it breaks you out of local optima by questioning the frame itself.

Critical Thinking. Challenge your own premises before others challenge them. Distinguish correlation from causation. Recognize cognitive biases before they recruit you. The ancient Socratic method was nothing but structured critical thinking applied out loud.

Evidence-Based Investigation. No conclusion without evidence from the actual world — not from memory, not from assumption, not from what seemed true last week. Science codified this. Medicine bet lives on it. Good investigators never assert “X doesn’t exist” without exhaustive search.

Inversion Thinking. Charlie Munger’s favorite tool: ask “what would guarantee failure?” then systematically avoid those things. When two paths look equal, choose the one that avoids the worst downside. Most people optimize for best-case. The wisest optimize by eliminating catastrophe first.

Layer 2: Execution Principles — How to Act

Root Cause, Not Symptom. Fix the protocol that allowed a failure, not just the failure itself. A patch is not a solution. A solution changes the design so the problem cannot recur. Treating symptoms is the most common form of expensive organizational busy-work.

Audit Trails. What is not recorded does not survive. Every significant decision, change, and outcome should leave a durable, queryable trace. This is how organizations learn without losing knowledge when people leave. It is also how trust is built — in systems and between humans.

Metrics of Success. “Done” is not a feeling. Before starting any task, define what the verifiable done-state looks like. This sounds obvious, yet it is rarely practiced in daily work. Its absence is why most projects drift.
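One way to make a done-state verifiable is to write each success criterion as a check that returns true or false, so that “done” means every check passes. The sketch below is a minimal Python illustration under that assumption; the `Task` class, its fields, and the example criteria are hypothetical and not taken from any particular agent framework.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Task:
    """Hypothetical task whose done-state is a set of named, verifiable checks."""
    name: str
    # Maps a human-readable criterion to a function that verifies it.
    criteria: Dict[str, Callable[[], bool]] = field(default_factory=dict)

    def unmet_criteria(self) -> List[str]:
        """Descriptions of the criteria whose checks currently fail."""
        return [desc for desc, check in self.criteria.items() if not check()]

    def is_done(self) -> bool:
        # With no criteria defined, the task is never "done": define done first.
        return bool(self.criteria) and not self.unmet_criteria()


# Illustrative usage with made-up criteria.
draft_word_count = 1450
report = Task("Quarterly report", {
    "draft is at least 1,200 words": lambda: draft_word_count >= 1200,
    "treasurer has signed off": lambda: False,  # placeholder: not yet signed
})
print(report.is_done())         # False
print(report.unmet_criteria())  # ['treasurer has signed off']
```

Refusing to call a task with no criteria “done” is the design choice that mirrors the principle: the done-state must exist before the work can satisfy it.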

Verify Before Delivering. Never say “it’s ready” without checking it yourself first. Open the preview. Run the test. Curl the URL. This is a respect principle as much as a quality principle: the recipient’s time is not for debugging sloppy handoffs.

Layer 3: Leverage Principles — How to Scale Impact

Flywheel Thinking. Jim Collins identified that great companies don’t have a single dramatic breakthrough — they have a flywheel: each turn makes the next turn easier, compounding into unstoppable momentum. The same applies to individuals and teams. What is your flywheel? What investments today make tomorrow’s work structurally easier?

The Pareto Principle. Roughly 20% of inputs produce roughly 80% of outcomes. The exact split varies, but this is more than a cute heuristic: heavily skewed distributions are a common structural property of complex systems. The skill is identifying which 20% to focus on before spending the other 80% of effort on work with marginal returns.
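Identifying the “vital few” can be made concrete: sort inputs by contribution and take the shortest prefix that reaches a target share of the total. The Python sketch below assumes contributions are already measured as non-negative numbers; the function name, the 0.8 default, and the example figures are illustrative only.

```python
from typing import Dict, List


def vital_few(contributions: Dict[str, float], share: float = 0.8) -> List[str]:
    """Return the fewest inputs whose combined contribution reaches `share`
    of the total, taking the largest contributors first."""
    total = sum(contributions.values())
    if total <= 0:
        return []
    selected: List[str] = []
    running = 0.0
    for name, value in sorted(contributions.items(), key=lambda kv: kv[1], reverse=True):
        if running >= share * total:
            break  # target share already reached
        selected.append(name)
        running += value
    return selected


# Hypothetical funding sources for a small nonprofit (made-up numbers).
funding = {"grants": 52, "major donors": 30, "events": 8, "online": 6, "merchandise": 4}
print(vital_few(funding))  # ['grants', 'major donors']
```

In this made-up example two of five sources account for 82% of the total. Taking the largest contributors first guarantees the shortest such list, since no other set of the same size can sum to more.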

Skin in the Game. Nassim Taleb’s insight: advice that carries no cost to the giver is structurally unreliable. The best decision-makers stake something on their recommendations. For agents and advisors, this translates to: be explicit about confidence levels. Never let confident language smuggle in uncertain claims.

Layer 4: System Design — How to Build Organizations That Improve

Short Feedback Loops. Fast feedback beats slow feedback. The most effective learning systems compress the gap between action and consequence. Postmortems, heartbeat checks, daily reviews — these are all mechanisms for tightening the feedback loop.

Chesterton’s Fence. Before changing or removing something, understand why it was put there. The fence that looks unnecessary usually solved a problem that is now invisible. Destroying it reintroduces the original problem at the worst possible moment.

Separation of Concerns. Each component of a system should have one clear responsibility. When concerns tangle, debugging becomes archaeology, failures become mysterious, and improvements break unrelated things.

Kaizen: Compounding Improvement. Small, consistent improvements to a system compound into massive advantages over time. The Toyota Production System was not built in a day. It was built by workers who, every single day, were empowered and expected to improve one small thing.

The Dream: What Near-Optimal Would Feel Like

Imagine, for a moment, what it would feel like to actually apply all of these principles consistently:

Every decision starts with the right question: what is the irreducible truth here? Before acting, you invert: what would guarantee failure, and am I doing any of those things? Every action leaves a trace. Every project has explicit success criteria. Every failure produces a postmortem that fixes the underlying protocol, not just the symptom. Feedback arrives fast, not months after the damage is done. Resources flow to the 20% of work that produces 80% of the outcomes.

At the organizational level: no institutional knowledge is lost when someone leaves. Every team member is rowing with the same principles. Priorities are computed, not felt. Debt — technical, operational, strategic — is made visible before it becomes critical.

Now extend this to a nonprofit running on volunteers with no paid staff, or a large residential community governed by a small elected volunteer board making decisions that affect many families. These are exactly the contexts where principled decision-making matters most — and where it is most absent, because the people doing the work are stretched thin, uncompensated, and operating without professional organizational infrastructure.

What if that constraint were removed?

Why AI Agents Are the Missing Piece

AI agents — not AI chatbots, not AI autocomplete, but genuinely agentic systems that take actions, maintain state, monitor progress, and operate continuously — are the first technology capable of applying the Wisdom Stack reliably at scale.

Consider what an agent can do that a human advisor cannot:

Relentless consistency. An agent does not get tired. It does not have a bad day. It does not skip the postmortem because the meeting ran long. It applies the principles on the first decision and the ten-thousandth with equal rigor.

Durable, searchable memory. An agent’s context window is finite, but it can keep every open task, past decision, audit trail entry, and defined success metric in durable records and retrieve the relevant ones, checking current actions against them before proceeding.

Proactive rather than reactive. A human consultant applies principles when consulted. An agent monitors continuously and intervenes before drift becomes failure — the equivalent of a Chesterton’s Fence guardian who is always watching.

Compounding learning. An agent that logs its mistakes, mines them nightly, and promotes lessons into its own operating rules gets structurally better over time without requiring retraining. This is Kaizen implemented in software.

Separation of emotion and analysis. Agents have no ego invested in their previous decisions, so sunk cost carries no emotional weight for them. When evidence says change course, a well-instructed agent changes course.

The agent is not replacing human judgment. It is doing what the best institutional systems in history have always done: making consistent application of good principles structurally mandatory, not personally optional. But instead of an org chart and a policy manual, it is code and continuous operation.

A Living Proof of Concept: One Person, Three Agents, Three Contexts

This is not theoretical. I am currently running three AI agents built on OpenClaw, each serving a different context — and progressively embedding the full Wisdom Stack into all of them.

TitanBot is my personal agent. It handles research, writing, code, scheduling, and continuous task management for my work as a software researcher and developer. Root Cause Thinking is in its core identity file — with explicit red flags for symptom-patching language. Evidence-Based Investigation is a formal skill it loads when debugging. Audit trails are maintained in daily memory files, Gitea tickets, git commits, and a lessons-learned log. Every heartbeat cycle checks active tasks against defined success criteria. A nightly lesson-mining cron scans session logs for corrections, extracts patterns, and promotes them into persistent rules.
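The nightly lesson-mining step can be sketched in a few lines: scan logs for recorded corrections, count how often each recurs, and promote recurring ones into a persistent rule list. This is not how OpenClaw or TitanBot implements it; the `CORRECTION:` log marker, the lowercase normalization, and the two-occurrence threshold are assumptions chosen for illustration, and running it nightly (for example with cron) is left out.

```python
import re
from collections import Counter
from typing import List

# Assumed log convention: each human correction is logged on its own line
# as "CORRECTION: <lesson>". Real session logs will need their own parser.
CORRECTION = re.compile(r"^CORRECTION:\s*(.+)$", re.IGNORECASE)


def mine_lessons(log_lines: List[str]) -> Counter:
    """Count each distinct correction, normalized to trimmed lowercase text."""
    counts: Counter = Counter()
    for line in log_lines:
        match = CORRECTION.match(line.strip())
        if match:
            counts[match.group(1).strip().lower()] += 1
    return counts


def promote(counts: Counter, rules: List[str], min_occurrences: int = 2) -> List[str]:
    """Append corrections seen at least `min_occurrences` times to the rule list,
    skipping lessons that are already rules."""
    updated = list(rules)
    for lesson, n in counts.most_common():
        if n >= min_occurrences and lesson not in updated:
            updated.append(lesson)
    return updated


session_log = [
    "Deployed preview build",
    "CORRECTION: verify the preview before saying it is ready",
    "correction: Verify the preview before saying it is ready",
    "CORRECTION: cite the source ticket",
]
rules = promote(mine_lessons(session_log), ["never assert absence without a search"])
print(rules)
# ['never assert absence without a search', 'verify the preview before saying it is ready']
```

Requiring a lesson to recur before promoting it is a deliberate filter: one-off corrections stay in the log, while repeated ones become rules.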

A nonprofit board agent serves a nonprofit organization. Nonprofits operate under a particular kind of pressure: high stakes, limited staff, volunteer-driven governance, and almost no margin for the kind of organizational drift that well-funded institutions can absorb. The agent applies the Wisdom Stack to the nonprofit’s operations: maintaining institutional memory across leadership transitions, enforcing evidence-based decision-making before commitments are made, and ensuring that every significant action leaves an audit trail that survives personnel changes. Principles that a professional management team would apply as a matter of course become structurally enforced, even when the people doing the work are stretched thin.

A local community agent serves a residential community governed by an elected volunteer board. Community governance is one of the hardest contexts for principled operation: decisions affect many people, board composition changes regularly, and institutional memory is fragile. The agent focuses on continuity and consistency — preserving the rationale behind past decisions, surfacing relevant precedents before new ones are made, and applying Chesterton’s Fence when long-standing policies are proposed for change. The result is governance that is less dependent on any single person’s knowledge or judgment.

Across all three, the Flywheel is the same: better audit trails → faster debugging and decision-making → fewer failures → more trust → more delegation → better agents → better audit trails.

What I have found most striking is not that individual principles help in isolation. It is that the combination, systematically embedded, produces emergent coherence. Each agent begins to feel less like a tool and more like a genuinely principled collaborator — one that challenges its own outputs, flags when it is speculating, and finishes what it starts instead of chasing the next interesting problem.

These behaviors do not come from the underlying LLM alone. They are principles, operationalized.

The Opportunity Is Broader Than You Think

Most people frame AI agents as productivity tools for individuals or efficiency tools for corporations. That framing is too narrow.

The deepest opportunity is deploying disciplined agents in contexts that have historically been unable to afford rigorous operation: nonprofits running on goodwill and overextended volunteers, community organizations where institutional memory lives in one person’s head, small teams doing important work without the organizational infrastructure to do it well.

These are the contexts where the Wisdom Stack would make the biggest difference — and where it has been most out of reach.

A small nonprofit can now have an agent that applies the same rigor as a Fortune 500 operations team. A community board can have an agent that maintains institutional memory across administrations. A solo researcher can have an agent that enforces the same epistemic discipline as the best peer-review processes.

The democratization of structured decision-making is not a side effect of the AI moment. It is the main event — if we are deliberate about building it.

Agent Skills: The Perfect Vehicle for Encoding and Sharing Principles

Here is the insight that changes everything about how we should think about building principled agents: principles don’t have to be re-invented for every agent from scratch. They can be encoded, packaged, and shared as reusable agent skills.

An agent skill is a modular, self-contained instruction set that an agent loads when a particular type of task arises. Skills define not just what to do, but how to reason — the epistemic standards, the verification steps, the audit requirements, the failure modes to avoid. Done well, a skill is a principle made executable.
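To make “a principle made executable” concrete, here is a minimal Python sketch of skills as data. Each skill declares which task types trigger it, the ordered steps to follow, and the failure modes to avoid, and a registry renders the matching skills as instructions when a task arrives. The `Skill` and `SkillRegistry` names and fields are hypothetical; they do not describe OpenClaw’s skill format or any other framework’s.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Tuple


@dataclass(frozen=True)
class Skill:
    """Hypothetical skill: a reusable, self-contained instruction set."""
    name: str
    triggers: FrozenSet[str]         # task types that cause this skill to load
    steps: Tuple[str, ...]           # reasoning and verification steps, in order
    failure_modes: Tuple[str, ...]   # known ways this kind of task goes wrong


class SkillRegistry:
    def __init__(self) -> None:
        self._skills: List[Skill] = []

    def register(self, skill: Skill) -> None:
        self._skills.append(skill)

    def load_for(self, task_type: str) -> List[Skill]:
        """Every registered skill whose triggers include this task type."""
        return [s for s in self._skills if task_type in s.triggers]

    def instructions_for(self, task_type: str) -> str:
        """Render the loaded skills as plain-text instructions for the agent."""
        lines: List[str] = []
        for skill in self.load_for(task_type):
            lines.append(f"## {skill.name}")
            lines += [f"{i}. {step}" for i, step in enumerate(skill.steps, start=1)]
            lines += [f"Avoid: {mode}" for mode in skill.failure_modes]
        return "\n".join(lines)


registry = SkillRegistry()
registry.register(Skill(
    name="Evidence-Based Investigation",
    triggers=frozenset({"debugging"}),
    steps=("Gather logs first", "Form hypotheses second",
           "Trace to root cause before proposing a fix"),
    failure_modes=("Asserting absence without an exhaustive search",),
))
print(registry.instructions_for("debugging"))
print(registry.load_for("scheduling"))  # []
```

Keeping skills as plain data, separate from the agent that loads them, is what makes them easy to share and refine independently.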

Consider what this means in practice:

Evidence-Based Investigation can be a skill: when an agent is debugging a failure, it loads a structured protocol: gather logs first, form hypotheses second, never assert absence without exhaustive search, trace to root cause before proposing a fix. Any agent that loads this skill inherits the same disciplined procedure a trained investigator would follow.
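The ordering in that protocol can be enforced rather than merely suggested. The Python sketch below is one hypothetical way to do it: each step raises an error if its prerequisite is missing, and a claim that something is absent is rejected until every required location has been searched. The class, method names, and messages are illustrative assumptions, not an existing library.

```python
from typing import List, Optional, Set


class ProtocolViolation(Exception):
    """Raised when an investigation step is attempted out of order."""


class Investigation:
    def __init__(self) -> None:
        self.evidence: List[str] = []
        self.hypotheses: List[str] = []
        self.root_cause: Optional[str] = None
        self.searched: Set[str] = set()

    def record_evidence(self, observation: str, source: str) -> None:
        # Each observation is tied to where it came from (log file, query, URL).
        self.evidence.append(f"{source}: {observation}")
        self.searched.add(source)

    def hypothesize(self, hypothesis: str) -> None:
        if not self.evidence:
            raise ProtocolViolation("Gather evidence before forming hypotheses.")
        self.hypotheses.append(hypothesis)

    def confirm_root_cause(self, cause: str) -> None:
        if cause not in self.hypotheses:
            raise ProtocolViolation("A root cause must come from a stated hypothesis.")
        self.root_cause = cause

    def propose_fix(self, fix: str) -> str:
        if self.root_cause is None:
            raise ProtocolViolation("Trace to a root cause before proposing a fix.")
        return f"{fix} (addresses: {self.root_cause})"

    def claim_absent(self, item: str, must_search: Set[str]) -> str:
        missing = must_search - self.searched
        if missing:
            raise ProtocolViolation(
                f"Cannot claim {item!r} is absent; not yet searched: {sorted(missing)}")
        return f"{item!r} not found in {sorted(must_search)}"


inv = Investigation()
try:
    inv.propose_fix("restart the service")
except ProtocolViolation as err:
    print(err)  # Trace to a root cause before proposing a fix.

# Illustrative evidence and hypothesis (made up for the example).
inv.record_evidence("disk 100% full at 02:13", source="syslog")
inv.hypothesize("log rotation stopped")
inv.confirm_root_cause("log rotation stopped")
print(inv.propose_fix("re-enable log rotation and alert on disk usage"))
```

Raising an error instead of logging a warning is the point of the design: skipping a step becomes structurally costly rather than merely discouraged.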

Inversion Thinking can be a skill: before any significant plan is proposed, the agent runs a structured pre-mortem — enumerate the top three failure modes, check whether the current plan triggers any of them, and force an explicit answer before proceeding.
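A pre-mortem gate like this can be sketched as a check that blocks a plan until at least three failure modes are listed and each has an explicit answer: why the plan does not trigger it, or how it is mitigated. This is a hypothetical Python illustration; the class names, the `answer` field, and the example plan are assumptions.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FailureMode:
    description: str
    answer: str = ""  # why the plan avoids this, or how it is mitigated; empty = unanswered


class PreMortem:
    REQUIRED = 3  # the skill asks for the top three failure modes

    def __init__(self, plan: str, failure_modes: List[FailureMode]) -> None:
        self.plan = plan
        self.failure_modes = failure_modes

    def blockers(self) -> List[str]:
        """Reasons the plan may not proceed yet."""
        problems: List[str] = []
        if len(self.failure_modes) < self.REQUIRED:
            problems.append(
                f"List at least {self.REQUIRED} failure modes (got {len(self.failure_modes)}).")
        for mode in self.failure_modes:
            if not mode.answer.strip():
                problems.append(f"No explicit answer for: {mode.description}")
        return problems

    def may_proceed(self) -> bool:
        return not self.blockers()


check = PreMortem("Move board elections online", [
    FailureMode("Residents without internet access cannot vote",
                "Keep paper ballots available at the community office"),
    FailureMode("A household votes twice",
                "One code per household, checked against the roster"),
    FailureMode("Results are disputed"),
])
print(check.may_proceed())  # False
print(check.blockers())     # ['No explicit answer for: Results are disputed']
```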

Audit Trail Maintenance can be a skill: every significant action is logged to a durable, queryable record — memory files, git commits, ticketing systems — automatically, without relying on the agent or human to remember.
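As a minimal sketch of “durable, queryable” in this sense, the Python below appends each action to a JSON Lines file (one JSON object per line) and filters records by field. The file format, field names, and helper functions are assumptions for illustration; the same record could just as well go to git commits or a ticketing system.

```python
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path
from typing import Any, Dict, List


def log_action(path: Path, actor: str, action: str, reason: str,
               **details: Any) -> Dict[str, Any]:
    """Append one audit record as a JSON line. Earlier lines are never rewritten."""
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "action": action,
        "reason": reason,
        **details,
    }
    with path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
    return record


def query(path: Path, **filters: Any) -> List[Dict[str, Any]]:
    """Return every record whose fields match all of the given filters."""
    if not path.exists():
        return []
    with path.open(encoding="utf-8") as f:
        records = [json.loads(line) for line in f if line.strip()]
    return [r for r in records if all(r.get(k) == v for k, v in filters.items())]


with tempfile.TemporaryDirectory() as tmp:
    trail = Path(tmp) / "audit.jsonl"
    log_action(trail, "board-agent", "approve_budget", "Quorum met", vote="5-2")
    log_action(trail, "board-agent", "schedule_meeting", "Annual meeting due")
    budget_records = query(trail, action="approve_budget")

print(len(budget_records), budget_records[0]["vote"])  # 1 5-2
```

Appending rather than editing is the choice that matters here: a trail that can be silently rewritten offers little basis for trust.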

Skills are the mechanism that makes the Wisdom Stack portable and composable. Instead of one person laboriously embedding principles into one agent’s identity file, a community of builders can develop a shared library of principled skills — each one battle-tested, each one encoding lessons learned from real failures, each one available to any agent that needs it.

This is the flywheel applied to principle propagation itself. One person encodes Evidence-Based Investigation into a skill. Another refines it. A third adapts it for a nonprofit governance context. A fourth extends it for community decision-making. Over time, the skill library becomes a living codification of the Wisdom Stack — not in a book that people read and forget, but in executable form that agents load and apply.

The Path Forward

We are at an inflection point. The principles exist. The technology to apply them systematically exists. The skill architecture to make them portable and composable exists. What is missing is the deliberate work of building the library — translating the Wisdom Stack, one principle at a time, into skills that any agent can load and immediately enforce.

This is not a solo project. It is an open invitation. Every principle you know, every lesson you have learned the hard way, every protocol that prevented a failure — these are candidates for a skill. Build it. Share it. Let it compound.

The opportunity is not just for engineers. Any individual, nonprofit, or community organization can begin: identify the principles you know well but don’t reliably practice, then build a skill that makes skipping them structurally costly.

Start with the first-principles gate. Before any significant decision, require an explicit answer to: what is the bedrock truth here, and am I reasoning from it or from inherited assumption?

Then add inversion. Then audit trails. Then success metrics. Stack the layers. Watch the flywheel turn.

We have spent five thousand years figuring out how to think well. For the first time in history, we have a technology that can actually enforce it — not just for the privileged few with access to elite advisors and professional infrastructure, but for anyone willing to build the skills that embody the principles they already know.

The principles are not new. The agents are not magic. What is new is the combination: timeless wisdom, encoded as executable skills, running continuously in agents that never tire, never forget, and never skip the step that mattered most.

Build the skills. Share them. Let them compound.

I am building a personal agent, a nonprofit board agent, and a local community agent on OpenClaw, and actively developing the skills that encode the Wisdom Stack across all three. If you are doing similar work — embedding principled reasoning into agents for individuals, nonprofits, or communities — I would love to compare notes and co-develop skills. The Wisdom Stack is a draft, not a dogma, and it gets better with more contributors.